Designing for the Unknown:

Designing for the Unknown:

Designing for the Unknown:

What Running AI Agents on My Mac Is Teaching Me About Enterprise AI

What Running AI Agents on My Mac Is Teaching Me About Enterprise AI

What Running AI Agents on My Mac Is Teaching Me About Enterprise AI

Working with OpenClaw locally gave me a smaller, more direct way to explore the same problems enterprise teams will face as agent systems become more persistent and connected. This article is a first pass at what that work is teaching me.

Projects

·

4 min

For the past couple of months, I’ve been running OpenClaw locally on a separate Mac mini. I started doing it because I wanted to get closer to the part of agent systems that only really shows up once they have to persist over time.

Agent memory starts to really matter, context management becomes a real constraint. Coordination gets harder. And the whole experience of the system depends on a lot more than whether the model can generate a smart answer.

I wanted a way to test some of my questions directly instead of thinking about them in the abstract. OpenClaw gave me that.

This is a first pass at what I've been working on.

A local proving ground

At Outshift, a lot of our work focuses on where agent systems are heading before most organizations are really there yet.

We published our Internet of Agents thesis before multi-agent systems became a normal part of the conversation, and that thinking shaped a lot of what came after, including HAX, our work with AGNTCY, and broader questions around how agents discover each other, exchange context, and operate across systems.

That made this kind of hands-on experimentation especially useful, because it's a practical way to work through some of those issues before they show up more fully in enterprise settings.

Where it broke

The first thing OpenClaw made obvious was how quickly these systems start to fall apart when memory and context are weak.

Longer threads became unreliable. The agent would lose track of what we had been doing. Important details would get compressed away or dropped entirely. What was supposed to feel persistent often felt fragile.

The OpenClaw project has improved a lot since then. But in the version I started with, the gaps were significant enough that it was hard to use seriously.

That ended up being the useful part, because the failures pointed straight at the real issue. Once agents need to operate over time, the challenge is no longer just getting a good answer in the moment. It becomes a systems problem. What should an agent remember? What should it summarize? What needs to remain available in full detail? What can it safely act on? How do you preserve continuity without letting the whole thing drift off course?

Those are local problems when you are running one setup on a Mac mini. They are also enterprise problems once agent behavior becomes more persistent, more connected, and more operational.

Making it usable

To get OpenClaw into a state where I could actually push it further, I had to spend a lot of time fixing memory and context.

Out of the box, those were the core issues. Once conversations got longer, the system started losing important detail, compressing the wrong things, and dropping enough continuity that trust started to break with it.

So I started changing things.

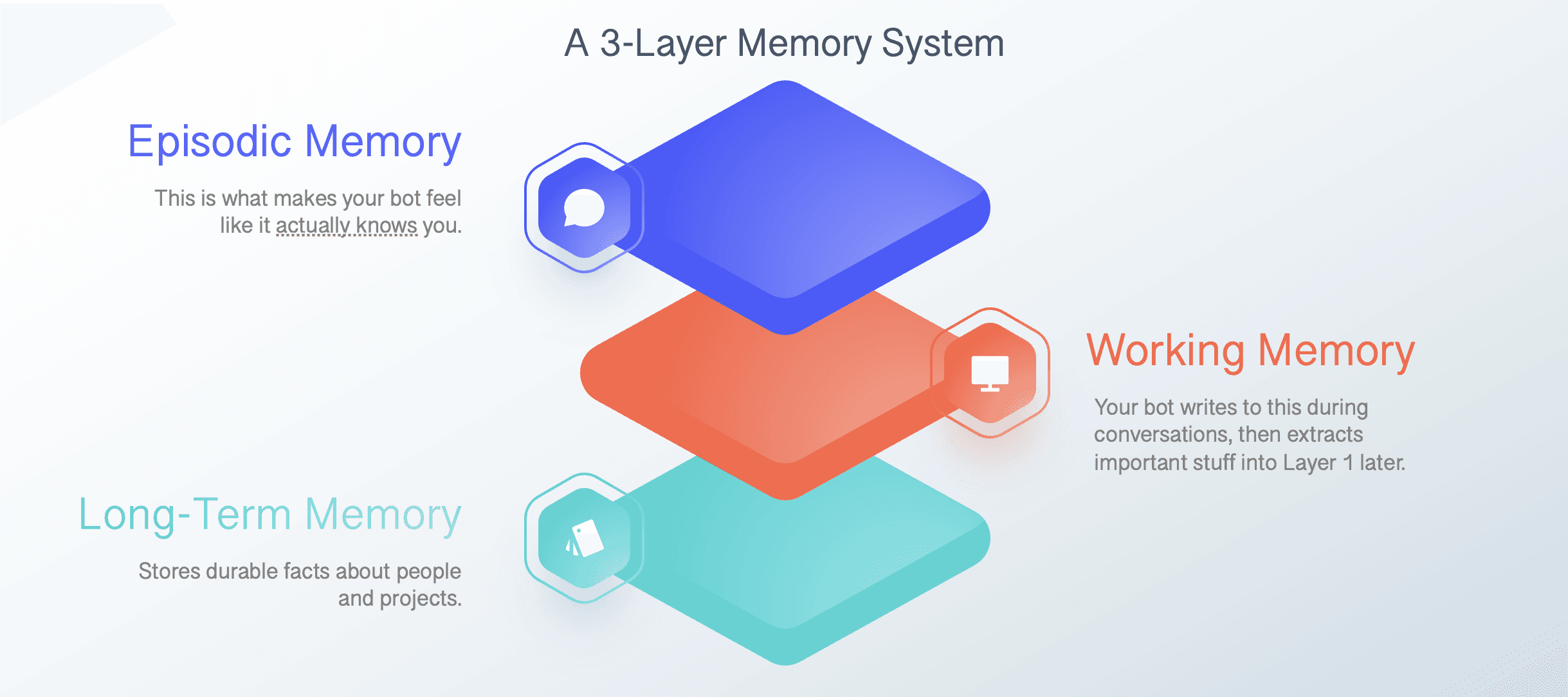

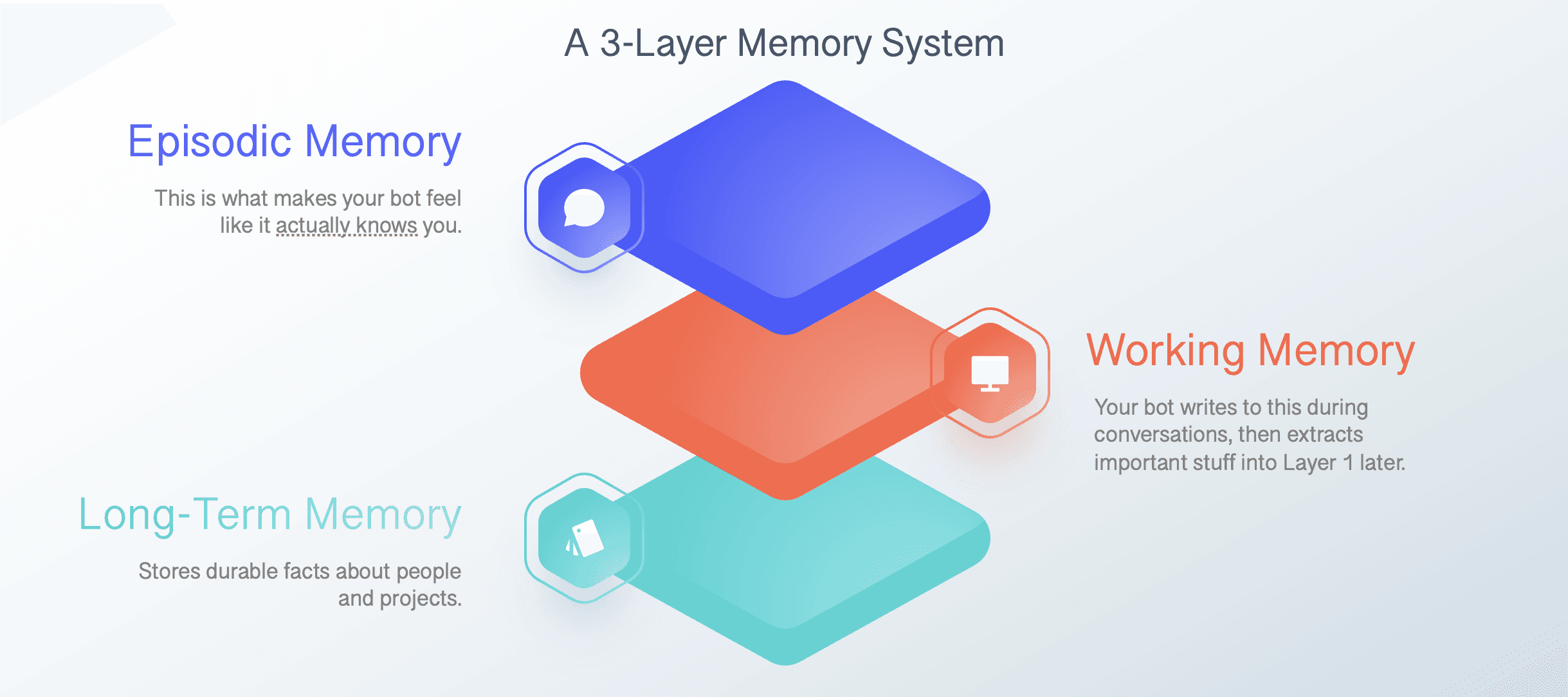

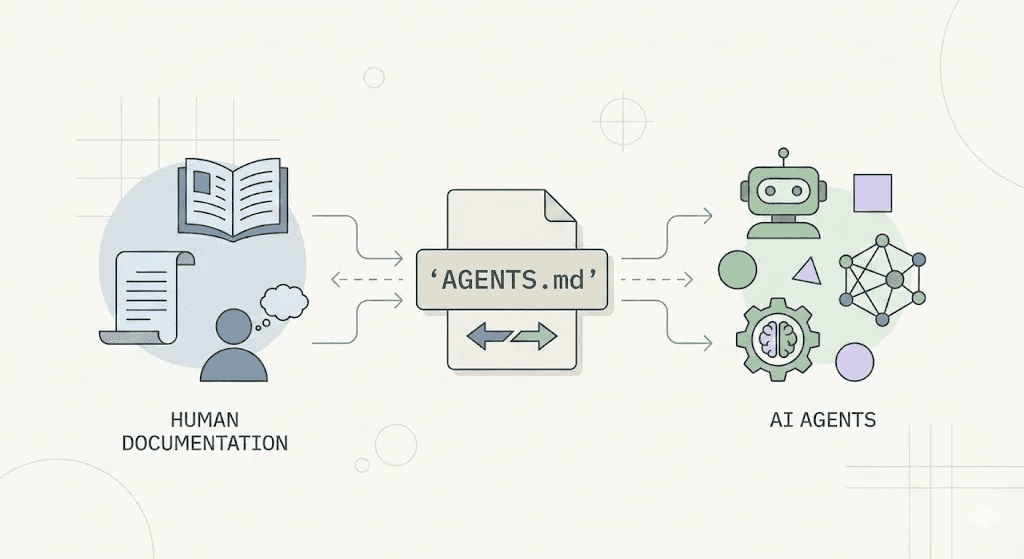

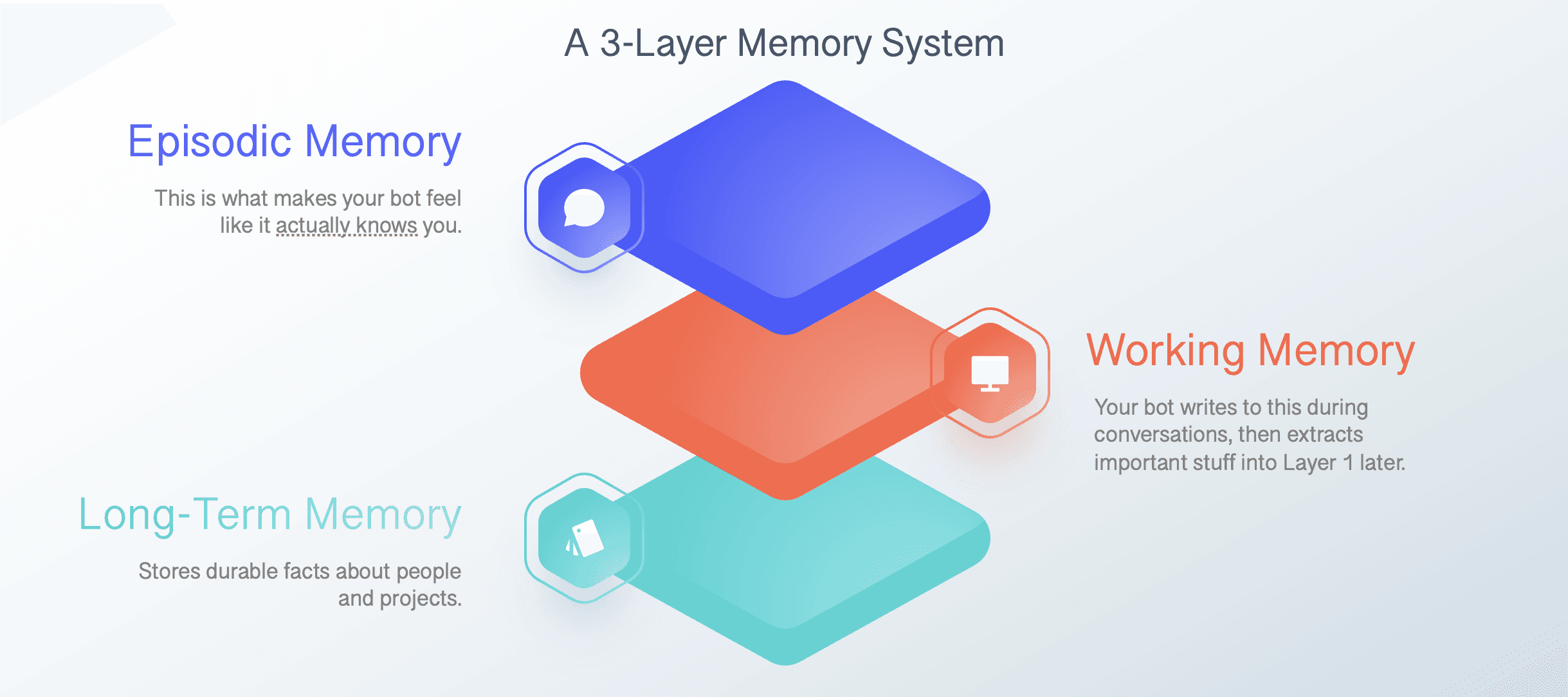

I built a three-layer memory model: working memory for current tasks and daily notes, episodic memory for session history, and long-term memory for more stable facts, preferences, and reference material. The agent writes to each layer differently and pulls from them differently depending on the situation.

I also implemented LosslessClaw, which let me compress older conversation history into summaries that could still be expanded later when needed. Instead of watching useful context disappear once the window filled up, I could preserve it in a structure that was still accessible.

Then I added a Mac Studio running local models through Ollama. Not because the models were always better. Often they were not. But they gave me more room to work. No API limits, no constant concern about running out of context halfway through a task, and more freedom to let longer workflows play out.

None of this was especially elegant. It was mostly trial and error, frustration, tweaking, and trying again. But once memory and context became more reliable, the system stopped feeling like a fragile demo and started feeling like something I could learn from.

Moving from one agent to many

The more interesting experiments started once I moved beyond a single assistant.

This connected directly to another area we have been working on at Outshift: the Internet of Cognition. The question there is not just whether agents can send messages to each other, but whether they can build enough shared understanding to align on goals, interpret context in similar ways, and produce something more coherent than a chain of handoffs.

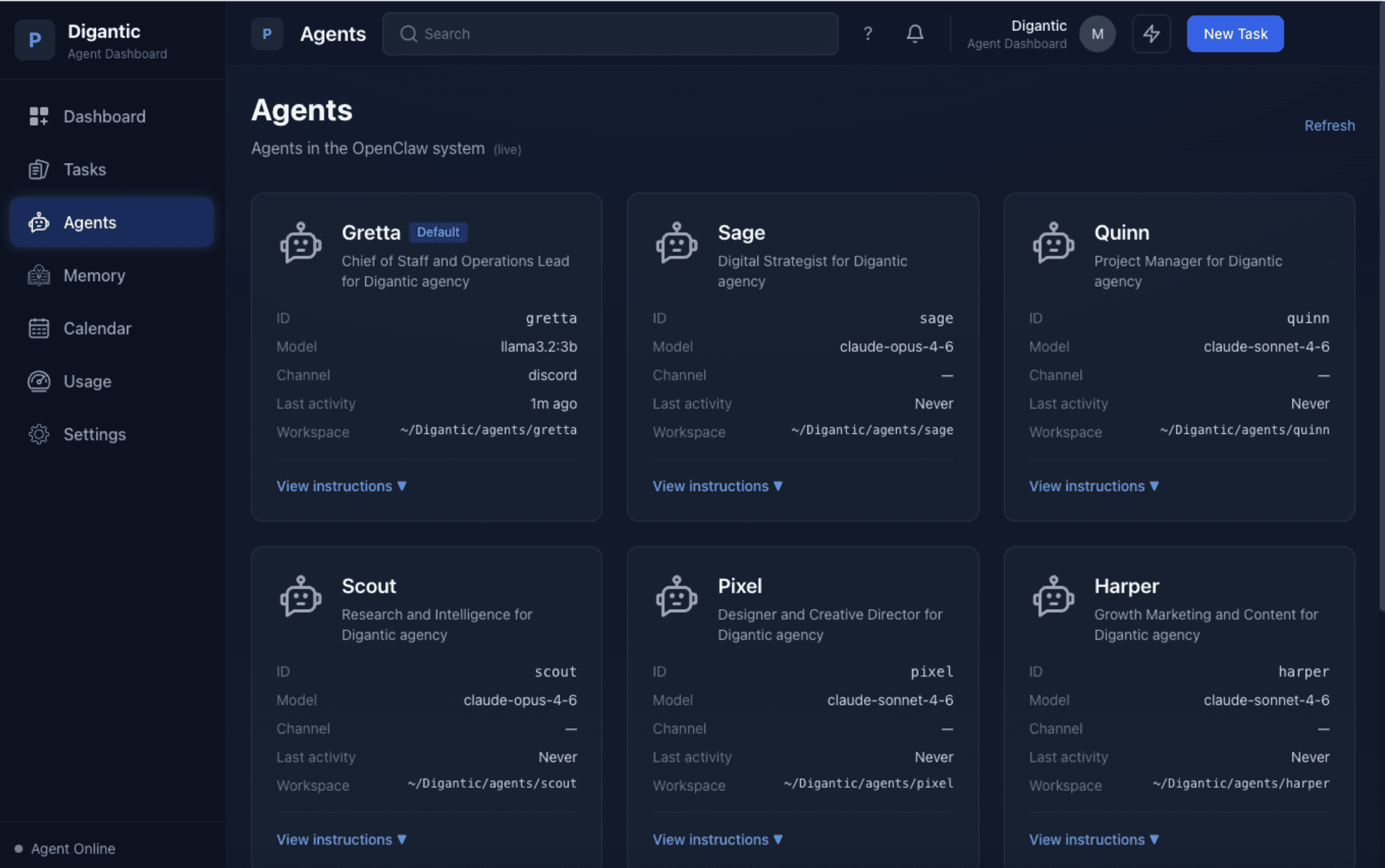

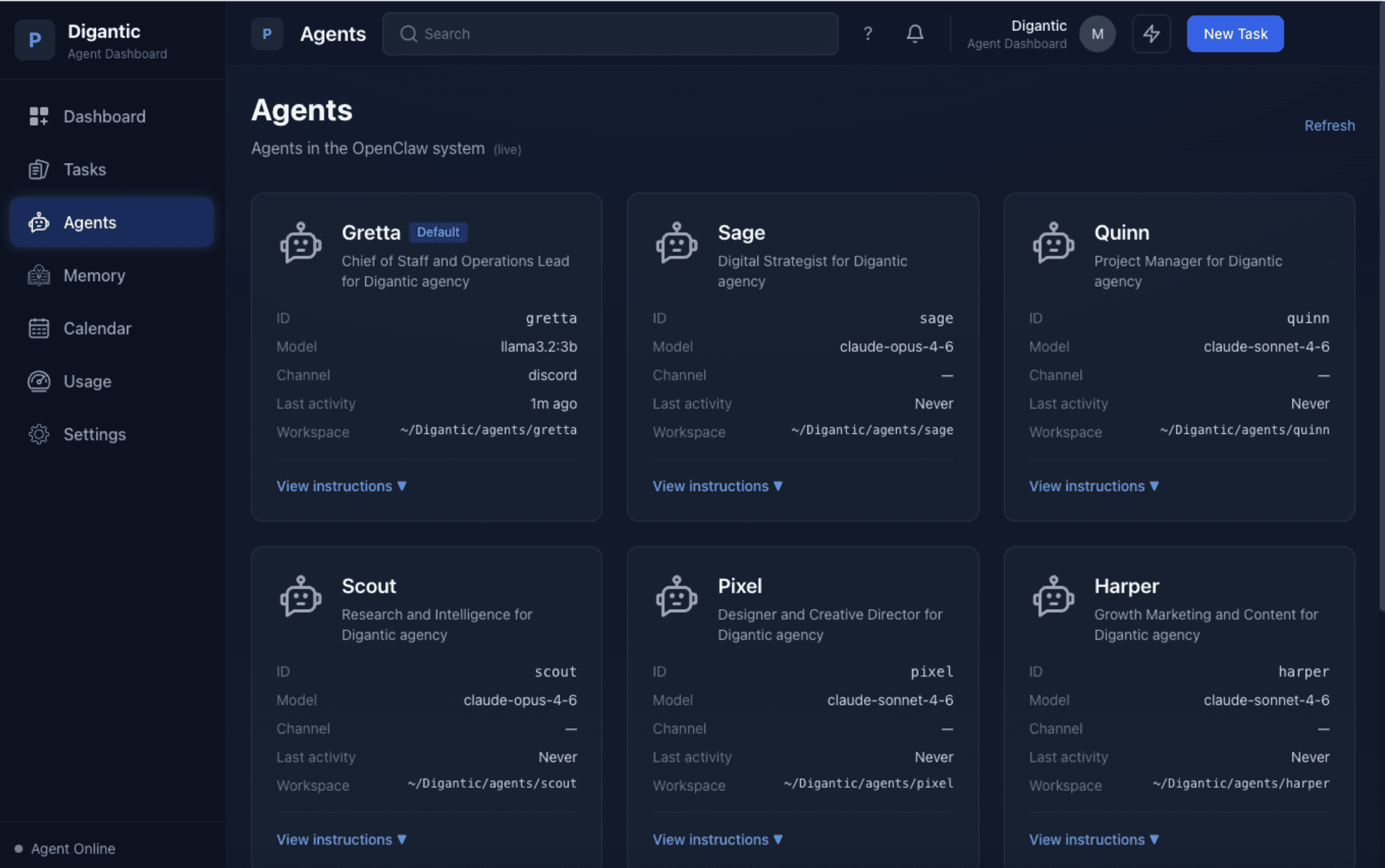

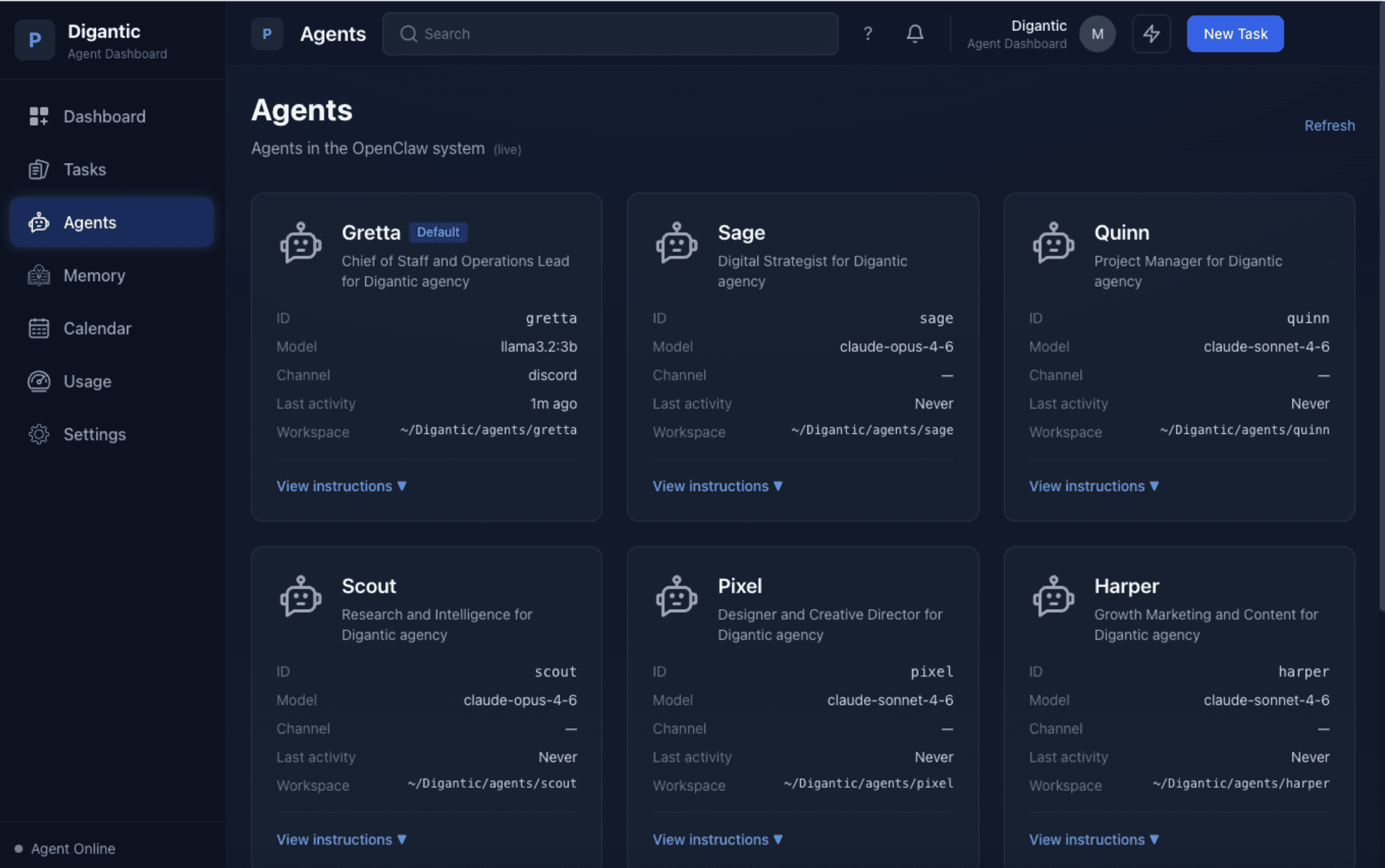

To test that, I set up a separate OpenClaw environment as a simulated consulting agency. Seven specialized agents, each with a distinct role and point of view. I ran mock client engagements through the system: intake, framing, strategy, deliverables. Each agent built up its own memory from the vantage point of its role.

Then I pushed on the part that interested me most. What happens when those agents need to align on a recommendation? What carries over cleanly? What gets distorted? Where does coordination start to crack even when each agent seems competent on its own?

That work deserves its own article, but one thing became clear quickly: message passing is not the same as shared understanding. A group of agents can look coordinated on the surface while still operating from different assumptions, incomplete context, or slightly different goals.

That gap sits close to the center of the Internet of Agents work we have been doing. Getting agents to connect, discover each other, and exchange messages matters. But connection alone does not create shared context, aligned intent, or anything we should confuse with real collaboration.

What changed for me

What changed for me most was how quickly memory and context moved from being infrastructure concerns to being the foundation of the experience itself.

A strong model inside a weak memory system still produces a weak experience. An agent that cannot reliably retain, retrieve, or reconstruct the right context is like working on a computer that keeps losing your files.

That also reinforced something I care a lot about as a design leader: human-agent collaboration is not just a technical problem. It is a design problem. When should an agent act on its own? When should it ask? How should it show what it remembered, what it inferred, or why it made a recommendation? How do people correct it without having to rebuild everything from scratch?

The other thing this changed for me is how I think about local experimentation. Running agents on a Mac is obviously not the same as deploying them across an enterprise, but these experiments are not toy versions of the real problem. The same categories of failure show up early: handoff issues, brittle tool use, trust breakdowns, memory gaps, coordination drift, and the slow erosion of continuity once the system starts losing the thread.

And really, some things you only really learn by building. Reading papers helps. Following the space helps. Talking to engineers helps. But there is a difference between hearing that multi-agent systems are hard and watching one fail in a way that exposes the exact assumption you got wrong.

That is what makes this kind of work useful. It compresses the learning. You get a smaller environment where the structural problems show up faster.

What’s next

I’m still in the middle of this work.

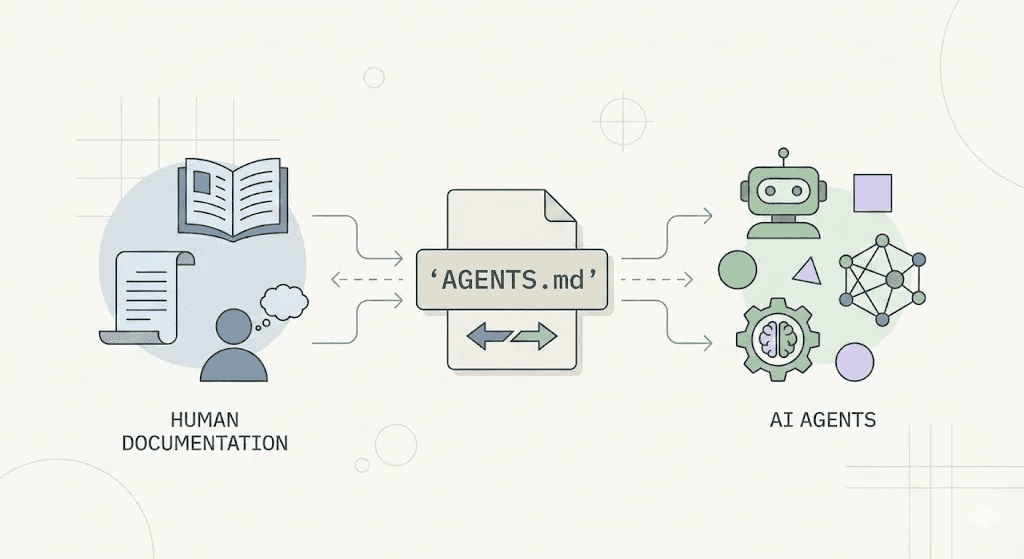

Some of the changes I made have made these systems more useful. Some have mostly made the weak spots easier to see. The value of working with a local agent framework like OpenClaw was how quickly it turned abstract questions into concrete ones. Memory, context, coordination, trust. They stopped being ideas and started becoming things I had to deal with directly.

I’ll write more soon about the multi-agent consulting experiment, the move toward the Internet of Cognition, and what these local systems might reveal as enterprises move from isolated AI features to more persistent and connected agent behavior. Once agents start operating over time, the question isn't about whether they can produce a good answer. It's whether the system around them is strong enough to keep that answer connected to context, continuity, and human trust.

For the past couple of months, I’ve been running OpenClaw locally on a separate Mac mini. I started doing it because I wanted to get closer to the part of agent systems that only really shows up once they have to persist over time.

Agent memory starts to really matter, context management becomes a real constraint. Coordination gets harder. And the whole experience of the system depends on a lot more than whether the model can generate a smart answer.

I wanted a way to test some of my questions directly instead of thinking about them in the abstract. OpenClaw gave me that.

This is a first pass at what I've been working on.

A local proving ground

At Outshift, a lot of our work focuses on where agent systems are heading before most organizations are really there yet.

We published our Internet of Agents thesis before multi-agent systems became a normal part of the conversation, and that thinking shaped a lot of what came after, including HAX, our work with AGNTCY, and broader questions around how agents discover each other, exchange context, and operate across systems.

That made this kind of hands-on experimentation especially useful, because it's a practical way to work through some of those issues before they show up more fully in enterprise settings.

Where it broke

The first thing OpenClaw made obvious was how quickly these systems start to fall apart when memory and context are weak.

Longer threads became unreliable. The agent would lose track of what we had been doing. Important details would get compressed away or dropped entirely. What was supposed to feel persistent often felt fragile.

The OpenClaw project has improved a lot since then. But in the version I started with, the gaps were significant enough that it was hard to use seriously.

That ended up being the useful part, because the failures pointed straight at the real issue. Once agents need to operate over time, the challenge is no longer just getting a good answer in the moment. It becomes a systems problem. What should an agent remember? What should it summarize? What needs to remain available in full detail? What can it safely act on? How do you preserve continuity without letting the whole thing drift off course?

Those are local problems when you are running one setup on a Mac mini. They are also enterprise problems once agent behavior becomes more persistent, more connected, and more operational.

Making it usable

To get OpenClaw into a state where I could actually push it further, I had to spend a lot of time fixing memory and context.

Out of the box, those were the core issues. Once conversations got longer, the system started losing important detail, compressing the wrong things, and dropping enough continuity that trust started to break with it.

So I started changing things.

I built a three-layer memory model: working memory for current tasks and daily notes, episodic memory for session history, and long-term memory for more stable facts, preferences, and reference material. The agent writes to each layer differently and pulls from them differently depending on the situation.

I also implemented LosslessClaw, which let me compress older conversation history into summaries that could still be expanded later when needed. Instead of watching useful context disappear once the window filled up, I could preserve it in a structure that was still accessible.

Then I added a Mac Studio running local models through Ollama. Not because the models were always better. Often they were not. But they gave me more room to work. No API limits, no constant concern about running out of context halfway through a task, and more freedom to let longer workflows play out.

None of this was especially elegant. It was mostly trial and error, frustration, tweaking, and trying again. But once memory and context became more reliable, the system stopped feeling like a fragile demo and started feeling like something I could learn from.

Moving from one agent to many

The more interesting experiments started once I moved beyond a single assistant.

This connected directly to another area we have been working on at Outshift: the Internet of Cognition. The question there is not just whether agents can send messages to each other, but whether they can build enough shared understanding to align on goals, interpret context in similar ways, and produce something more coherent than a chain of handoffs.

To test that, I set up a separate OpenClaw environment as a simulated consulting agency. Seven specialized agents, each with a distinct role and point of view. I ran mock client engagements through the system: intake, framing, strategy, deliverables. Each agent built up its own memory from the vantage point of its role.

Then I pushed on the part that interested me most. What happens when those agents need to align on a recommendation? What carries over cleanly? What gets distorted? Where does coordination start to crack even when each agent seems competent on its own?

That work deserves its own article, but one thing became clear quickly: message passing is not the same as shared understanding. A group of agents can look coordinated on the surface while still operating from different assumptions, incomplete context, or slightly different goals.

That gap sits close to the center of the Internet of Agents work we have been doing. Getting agents to connect, discover each other, and exchange messages matters. But connection alone does not create shared context, aligned intent, or anything we should confuse with real collaboration.

What changed for me

What changed for me most was how quickly memory and context moved from being infrastructure concerns to being the foundation of the experience itself.

A strong model inside a weak memory system still produces a weak experience. An agent that cannot reliably retain, retrieve, or reconstruct the right context is like working on a computer that keeps losing your files.

That also reinforced something I care a lot about as a design leader: human-agent collaboration is not just a technical problem. It is a design problem. When should an agent act on its own? When should it ask? How should it show what it remembered, what it inferred, or why it made a recommendation? How do people correct it without having to rebuild everything from scratch?

The other thing this changed for me is how I think about local experimentation. Running agents on a Mac is obviously not the same as deploying them across an enterprise, but these experiments are not toy versions of the real problem. The same categories of failure show up early: handoff issues, brittle tool use, trust breakdowns, memory gaps, coordination drift, and the slow erosion of continuity once the system starts losing the thread.

And really, some things you only really learn by building. Reading papers helps. Following the space helps. Talking to engineers helps. But there is a difference between hearing that multi-agent systems are hard and watching one fail in a way that exposes the exact assumption you got wrong.

That is what makes this kind of work useful. It compresses the learning. You get a smaller environment where the structural problems show up faster.

What’s next

I’m still in the middle of this work.

Some of the changes I made have made these systems more useful. Some have mostly made the weak spots easier to see. The value of working with a local agent framework like OpenClaw was how quickly it turned abstract questions into concrete ones. Memory, context, coordination, trust. They stopped being ideas and started becoming things I had to deal with directly.

I’ll write more soon about the multi-agent consulting experiment, the move toward the Internet of Cognition, and what these local systems might reveal as enterprises move from isolated AI features to more persistent and connected agent behavior. Once agents start operating over time, the question isn't about whether they can produce a good answer. It's whether the system around them is strong enough to keep that answer connected to context, continuity, and human trust.

For the past couple of months, I’ve been running OpenClaw locally on a separate Mac mini. I started doing it because I wanted to get closer to the part of agent systems that only really shows up once they have to persist over time.

Agent memory starts to really matter, context management becomes a real constraint. Coordination gets harder. And the whole experience of the system depends on a lot more than whether the model can generate a smart answer.

I wanted a way to test some of my questions directly instead of thinking about them in the abstract. OpenClaw gave me that.

This is a first pass at what I've been working on.

A local proving ground

At Outshift, a lot of our work focuses on where agent systems are heading before most organizations are really there yet.

We published our Internet of Agents thesis before multi-agent systems became a normal part of the conversation, and that thinking shaped a lot of what came after, including HAX, our work with AGNTCY, and broader questions around how agents discover each other, exchange context, and operate across systems.

That made this kind of hands-on experimentation especially useful, because it's a practical way to work through some of those issues before they show up more fully in enterprise settings.

Where it broke

The first thing OpenClaw made obvious was how quickly these systems start to fall apart when memory and context are weak.

Longer threads became unreliable. The agent would lose track of what we had been doing. Important details would get compressed away or dropped entirely. What was supposed to feel persistent often felt fragile.

The OpenClaw project has improved a lot since then. But in the version I started with, the gaps were significant enough that it was hard to use seriously.

That ended up being the useful part, because the failures pointed straight at the real issue. Once agents need to operate over time, the challenge is no longer just getting a good answer in the moment. It becomes a systems problem. What should an agent remember? What should it summarize? What needs to remain available in full detail? What can it safely act on? How do you preserve continuity without letting the whole thing drift off course?

Those are local problems when you are running one setup on a Mac mini. They are also enterprise problems once agent behavior becomes more persistent, more connected, and more operational.

Making it usable

To get OpenClaw into a state where I could actually push it further, I had to spend a lot of time fixing memory and context.

Out of the box, those were the core issues. Once conversations got longer, the system started losing important detail, compressing the wrong things, and dropping enough continuity that trust started to break with it.

So I started changing things.

I built a three-layer memory model: working memory for current tasks and daily notes, episodic memory for session history, and long-term memory for more stable facts, preferences, and reference material. The agent writes to each layer differently and pulls from them differently depending on the situation.

I also implemented LosslessClaw, which let me compress older conversation history into summaries that could still be expanded later when needed. Instead of watching useful context disappear once the window filled up, I could preserve it in a structure that was still accessible.

Then I added a Mac Studio running local models through Ollama. Not because the models were always better. Often they were not. But they gave me more room to work. No API limits, no constant concern about running out of context halfway through a task, and more freedom to let longer workflows play out.

None of this was especially elegant. It was mostly trial and error, frustration, tweaking, and trying again. But once memory and context became more reliable, the system stopped feeling like a fragile demo and started feeling like something I could learn from.

Moving from one agent to many

The more interesting experiments started once I moved beyond a single assistant.

This connected directly to another area we have been working on at Outshift: the Internet of Cognition. The question there is not just whether agents can send messages to each other, but whether they can build enough shared understanding to align on goals, interpret context in similar ways, and produce something more coherent than a chain of handoffs.

To test that, I set up a separate OpenClaw environment as a simulated consulting agency. Seven specialized agents, each with a distinct role and point of view. I ran mock client engagements through the system: intake, framing, strategy, deliverables. Each agent built up its own memory from the vantage point of its role.

Then I pushed on the part that interested me most. What happens when those agents need to align on a recommendation? What carries over cleanly? What gets distorted? Where does coordination start to crack even when each agent seems competent on its own?

That work deserves its own article, but one thing became clear quickly: message passing is not the same as shared understanding. A group of agents can look coordinated on the surface while still operating from different assumptions, incomplete context, or slightly different goals.

That gap sits close to the center of the Internet of Agents work we have been doing. Getting agents to connect, discover each other, and exchange messages matters. But connection alone does not create shared context, aligned intent, or anything we should confuse with real collaboration.

What changed for me

What changed for me most was how quickly memory and context moved from being infrastructure concerns to being the foundation of the experience itself.

A strong model inside a weak memory system still produces a weak experience. An agent that cannot reliably retain, retrieve, or reconstruct the right context is like working on a computer that keeps losing your files.

That also reinforced something I care a lot about as a design leader: human-agent collaboration is not just a technical problem. It is a design problem. When should an agent act on its own? When should it ask? How should it show what it remembered, what it inferred, or why it made a recommendation? How do people correct it without having to rebuild everything from scratch?

The other thing this changed for me is how I think about local experimentation. Running agents on a Mac is obviously not the same as deploying them across an enterprise, but these experiments are not toy versions of the real problem. The same categories of failure show up early: handoff issues, brittle tool use, trust breakdowns, memory gaps, coordination drift, and the slow erosion of continuity once the system starts losing the thread.

And really, some things you only really learn by building. Reading papers helps. Following the space helps. Talking to engineers helps. But there is a difference between hearing that multi-agent systems are hard and watching one fail in a way that exposes the exact assumption you got wrong.

That is what makes this kind of work useful. It compresses the learning. You get a smaller environment where the structural problems show up faster.

What’s next

I’m still in the middle of this work.

Some of the changes I made have made these systems more useful. Some have mostly made the weak spots easier to see. The value of working with a local agent framework like OpenClaw was how quickly it turned abstract questions into concrete ones. Memory, context, coordination, trust. They stopped being ideas and started becoming things I had to deal with directly.

I’ll write more soon about the multi-agent consulting experiment, the move toward the Internet of Cognition, and what these local systems might reveal as enterprises move from isolated AI features to more persistent and connected agent behavior. Once agents start operating over time, the question isn't about whether they can produce a good answer. It's whether the system around them is strong enough to keep that answer connected to context, continuity, and human trust.

So, that gives us a world where AI agents can discover and authenticate one another, share complex information securely, and adapt to uncertainty while collaborating across different domains. And users will be working with agents that will pursue complex goals with limited direct supervision, acting autonomously on behalf of them.

As a design team, we are actively shaping how we navigate this transformation. And one key question keeps emerging: How do we design AI experiences that empower human-machine teams, rather than just automate them?

The Agentic Teammate: Enhancing Knowledge Work

In this new world, AI agents become our teammates, offering powerful capabilities:

Knowledge Synthesis: Agents aggregate and analyze data from multiple sources, offering fresh perspectives on problems.

Scenario Simulation: Agents can create hypothetical scenarios and test them in a virtual environment, allowing knowledge workers to experiment and assess risks.

Constructive Feedback: Agents critically evaluate human-proposed solutions, identifying flaws and offering constructive feedback.

Collaboration Orchestration: Agents work with other agents to tackle complex problems, acting as orchestrators of a broader agentic ecosystem.

So, that gives us a world where AI agents can discover and authenticate one another, share complex information securely, and adapt to uncertainty while collaborating across different domains. And users will be working with agents that will pursue complex goals with limited direct supervision, acting autonomously on behalf of them.

As a design team, we are actively shaping how we navigate this transformation. And one key question keeps emerging: How do we design AI experiences that empower human-machine teams, rather than just automate them?

The Agentic Teammate: Enhancing Knowledge Work

In this new world, AI agents become our teammates, offering powerful capabilities:

Knowledge Synthesis: Agents aggregate and analyze data from multiple sources, offering fresh perspectives on problems.

Scenario Simulation: Agents can create hypothetical scenarios and test them in a virtual environment, allowing knowledge workers to experiment and assess risks.

Constructive Feedback: Agents critically evaluate human-proposed solutions, identifying flaws and offering constructive feedback.

Collaboration Orchestration: Agents work with other agents to tackle complex problems, acting as orchestrators of a broader agentic ecosystem.

Addressing the Challenges: Gaps in Human-Agent Collaboration

All this autonomous help is great, sure – but it's not without its challenges.

Autonomous agents have fundamental gaps that we need to address to ensure successful collaboration:

So, that gives us a world where AI agents can discover and authenticate one another, share complex information securely, and adapt to uncertainty while collaborating across different domains. And users will be working with agents that will pursue complex goals with limited direct supervision, acting autonomously on behalf of them.

As a design team, we are actively shaping how we navigate this transformation. And one key question keeps emerging: How do we design AI experiences that empower human-machine teams, rather than just automate them?

The Agentic Teammate: Enhancing Knowledge Work

In this new world, AI agents become our teammates, offering powerful capabilities:

Knowledge Synthesis: Agents aggregate and analyze data from multiple sources, offering fresh perspectives on problems.

Scenario Simulation: Agents can create hypothetical scenarios and test them in a virtual environment, allowing knowledge workers to experiment and assess risks.

Constructive Feedback: Agents critically evaluate human-proposed solutions, identifying flaws and offering constructive feedback.

Collaboration Orchestration: Agents work with other agents to tackle complex problems, acting as orchestrators of a broader agentic ecosystem.

Probabilistic Operations

AI agents work with probabilities, leading to inconsistent outcomes and misinterpretations of intent.

Trust Over Time

Humans tend to trust AI teammates less than human teammates, making it crucial to build that trust over time.

Gaps in Contextual Understanding

AI agents often share raw data instead of contextual states, and may miss human nuances like team dynamics and intuition.

Challenges in Mental Models

Evolving AI systems can be difficult for humans to understand and keep up with, as the AI's logic may not align with human mental models.

The Solution:

Five Design Principles for Human-Agent Collaboration

Put Humans in the Driver's Seat

Users should always have the final say, with clear boundaries and intuitive controls to adjust agent behavior. An example of this is Google Photos' Memories feature which allows users to customize their slideshows and turn the feature off completely.

Make the Invisible Visible

The AI's reasoning and decision-making processes should be transparent and easy to understand, with confidence levels or uncertainty displayed to set realistic expectations. North Face's AI shopping assistant exemplifies this by guiding users through a conversational process and providing clear recommendations.

Ensure Accountability

Anticipate edge cases to provide clear recovery steps, while empowering users to verify and adjust AI outcomes when needed. ServiceNow's Now Assist AI is designed to allow customer support staff to easily verify and adjust AI-generated insights and recommendations.

Collaborate, Don't Just Automate

Prioritize workflows that integrate human and AI capabilities, designing intuitive handoffs to ensure smooth collaboration. Aisera HR Agents demonstrate this by assisting with employee inquiries while escalating complex issues to human HR professionals.

Earn Trust Through Consistency:

Build trust gradually with reliable results in low-risk use cases, making reasoning and actions transparent. ServiceNow's Case Summarization tool is an example of using AI in a low-risk scenario to gradually build user trust in the system's capabilities.

Empowering Users with Control

Establishing clear boundaries for AI Agents to ensure they operate within a well-defined scope.

Designing Tomorrow's Human-Agent Collaboration At Outshift

These principles are the foundation for building effective partnerships between humans and AI at Outshift.

Building Confidence Through Clarity

Surface AI reasoning, displaying: Confidence Levels, realistic expectations, and the extent of changes to enable informed decision-making.

Always Try To Amplify Human Potential

Actively collaborate through simulations and come to an effective outcome together.

Let Users Stay In Control When It Matters

Easy access to detailed logs and performance metrics for every agent action, enabling the review of decisions, workflows, and ensure compliance. Include clear recovery steps for seamless continuity.

Take It One Interaction at a Time

See agent actions in context and observe agent performance in network improvement.

Addressing the Challenges: Gaps in Human-Agent Collaboration

All this autonomous help is great, sure – but it's not without its challenges.

Autonomous agents have fundamental gaps that we need to address to ensure successful collaboration:

Addressing the Challenges: Gaps in Human-Agent Collaboration

All this autonomous help is great, sure – but it's not without its challenges.

Autonomous agents have fundamental gaps that we need to address to ensure successful collaboration:

The Solution:

Five Design Principles for Human-Agent Collaboration

What to Consider:

Five Design Principles for Human-Agent Collaboration

Put Humans in the Driver's Seat

Users should always have the final say, with clear boundaries and intuitive controls to adjust agent behavior. An example of this is Google Photos' Memories feature which allows users to customize their slideshows and turn the feature off completely.

Make the Invisible Visible

The AI's reasoning and decision-making processes should be transparent and easy to understand, with confidence levels or uncertainty displayed to set realistic expectations. North Face's AI shopping assistant exemplifies this by guiding users through a conversational process and providing clear recommendations.

Ensure Accountability

Ensure Accountability

Anticipate edge cases to provide clear recovery steps, while empowering users to verify and adjust AI outcomes when needed. ServiceNow's Now Assist AI is designed to allow customer support staff to easily verify and adjust AI-generated insights and recommendations.

Collaborate, Don't Just Automate

Prioritize workflows that integrate human and AI capabilities, designing intuitive handoffs to ensure smooth collaboration. Aisera HR Agents demonstrate this by assisting with employee inquiries while escalating complex issues to human HR professionals.

Earn Trust Through Consistency:

Build trust gradually with reliable results in low-risk use cases, making reasoning and actions transparent. ServiceNow's Case Summarization tool is an example of using AI in a low-risk scenario to gradually build user trust in the system's capabilities.

Designing Tomorrow's Human-Agent Collaboration At Outshift

These principles are the foundation for building effective partnerships between humans and AI at Outshift.

Empowering Users with Control

Establishing clear boundaries for AI Agents to ensure they operate within a well-defined scope.

Building Confidence Through Clarity

Surface AI reasoning, displaying:

Confidence levels

Realistic Expectations

Extent of changes to enable informed decision-making

Addressing the Challenges: Gaps in Human-Agent Collaboration

All this autonomous help is great, sure – but it's not without its challenges.

Autonomous agents have fundamental gaps that we need to address to ensure successful collaboration:

The Solution:

Five Design Principles for Human-Agent Collaboration

What to Consider:

Five Design Principles for Human-Agent Collaboration

Put Humans in the Driver's Seat

Users should always have the final say, with clear boundaries and intuitive controls to adjust agent behavior. An example of this is Google Photos' Memories feature which allows users to customize their slideshows and turn the feature off completely.

Make the Invisible Visible

The AI's reasoning and decision-making processes should be transparent and easy to understand, with confidence levels or uncertainty displayed to set realistic expectations. North Face's AI shopping assistant exemplifies this by guiding users through a conversational process and providing clear recommendations.

Ensure Accountability

Ensure Accountability

Anticipate edge cases to provide clear recovery steps, while empowering users to verify and adjust AI outcomes when needed. ServiceNow's Now Assist AI is designed to allow customer support staff to easily verify and adjust AI-generated insights and recommendations.

Collaborate, Don't Just Automate

Prioritize workflows that integrate human and AI capabilities, designing intuitive handoffs to ensure smooth collaboration. Aisera HR Agents demonstrate this by assisting with employee inquiries while escalating complex issues to human HR professionals.

Earn Trust Through Consistency:

Build trust gradually with reliable results in low-risk use cases, making reasoning and actions transparent. ServiceNow's Case Summarization tool is an example of using AI in a low-risk scenario to gradually build user trust in the system's capabilities.

Always Try To Amplify Human Potential

Actively collaborate through simulations and come to an effective outcome together.

Let Users Stay In Control When It Matters

Easy access to detailed logs and performance metrics for every agent action, enabling the review of decisions, workflows, and ensure compliance. Include clear recovery steps for seamless continuity.

Designing Tomorrow's Human-Agent Collaboration At Outshift

These principles are the foundation for building effective partnerships between humans and AI at Outshift.

Take It One Interaction At A Time

See agent actions in context and observe agent performance in network improvement.

As we refine our design principles and push the boundaries of innovation, integrating advanced AI capabilities comes with a critical responsibility. For AI to become a trusted collaborator—rather than just a tool—we must design with transparency, clear guardrails, and a focus on building trust. Ensuring AI agents operate with accountability and adaptability will be key to fostering effective human-agent collaboration. By designing with intention, we can shape a future where AI not only enhances workflows and decision-making but also empowers human potential in ways that are ethical, reliable, and transformative.

Because in the end, the success of AI won’t be measured by its autonomy alone—but by how well it works with us to create something greater than either humans or machines could achieve alone.

Designing Tomorrow's Human-Agent Collaboration At Outshift

These principles are the foundation for building effective partnerships between humans and AI at Outshift.

Content